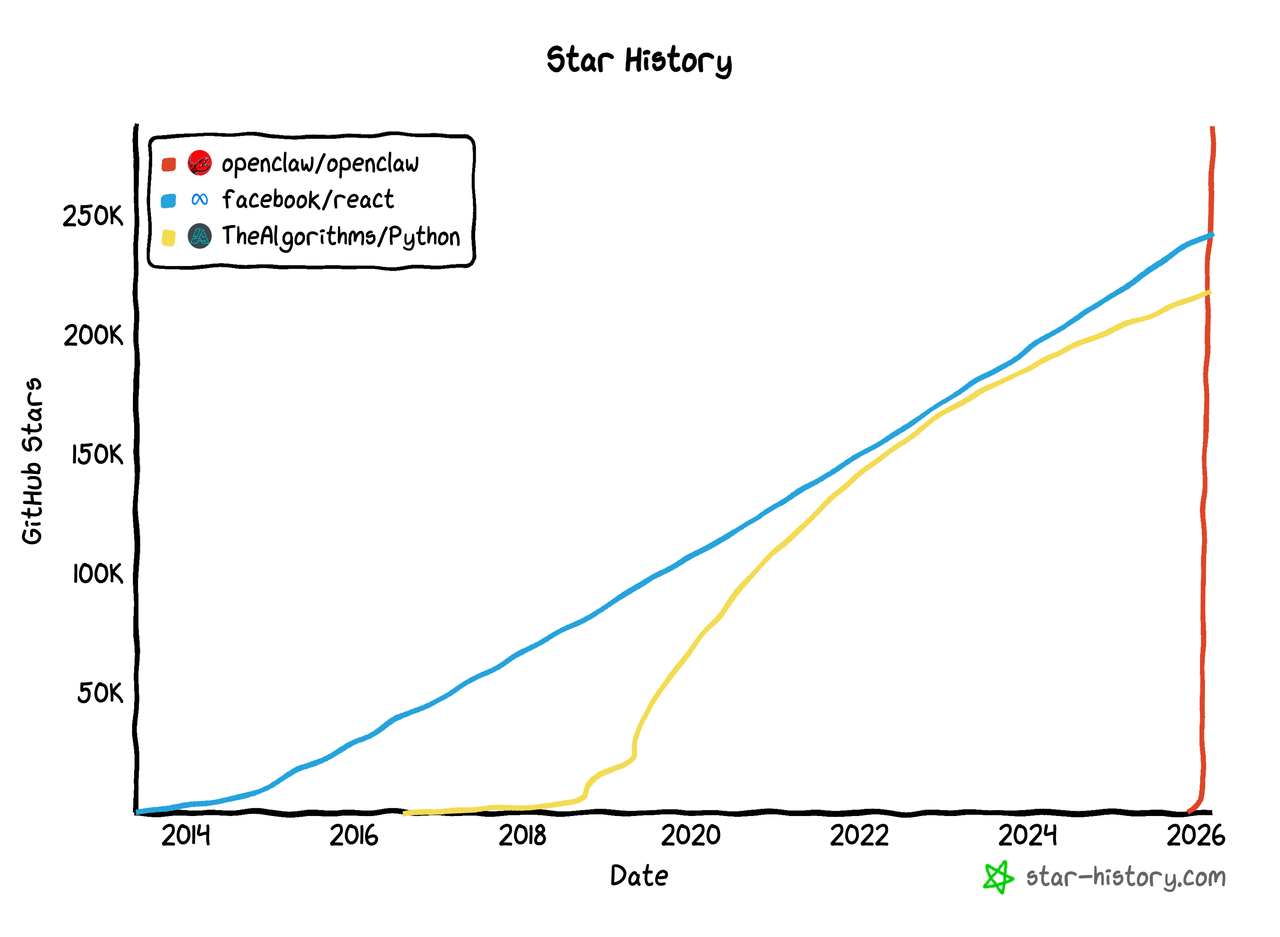

In recent months, OpenClaw has taken the AI world by storm. Media outlets, online communities, and developer forums have all been talking about it, sparking a wave of enthusiasm around “raising lobsters.” On GitHub’s AI topic page, its Star count has climbed all the way to the top.


Some media outlets have even described OpenClaw as “The Rise of a New King on GitHub.” But if we see it only as another viral open-source project, we may still be underestimating what this surge really signals. The rise of OpenClaw also reflects a broader shift in what people are paying attention to in open-source AI in 2026.

Last year, we reviewed the 20 most popular open-source AI projects on GitHub. At the time, the main focus was still on model capability, chat interfaces, and whether open-source products could close the gap with closed-source user experiences. This year, the list looks very different. Attention is moving toward practical application areas such as agentic execution, workflow orchestration, and multimodal generation.

With that shift in mind, we took another look at the 20 most-starred open-source AI projects on GitHub in 2026 and grouped them into a few broad categories. From that list, we selected several representative projects to highlight their core capabilities, product strengths, and distinct value in the evolving AI landscape.

20 open-source AI projects worth watching in 2026

The projects below are ranked by GitHub Stars.

| Rank | Project Name | Stars | Core Keyword | Target Users | One-line Summary | GitHub Link |
|---|---|---|---|---|---|---|
| 1 | OpenClaw | 302k | Agentic execution | Individuals | An open-source AI assistant for personal use, focused on cross-platform task execution | https://github.com/openclaw/openclaw |
| 2 | AutoGPT | 182k | Agentic execution | Developers | A classic autonomous agent project focused on task decomposition and autonomous execution | https://github.com/Significant-Gravitas/AutoGPT |
| 3 | n8n | 179k | Workflow orchestration | Enterprises | A workflow automation platform with native AI capabilities | https://github.com/n8n-io/n8n |
| 4 | Stable Diffusion WebUI | 162k | Multimodal generation | Creators | A classic web interface for Stable Diffusion | https://github.com/AUTOMATIC1111/stable-diffusion-webui |
| 5 | prompts.chat | 151k | Prompt resources | Individuals | An open-source prompt community and prompt library | https://github.com/f/prompts.chat |
| 6 | Dify | 132k | Workflow orchestration | Enterprises | A production-ready AI application platform built around agent workflows | https://github.com/langgenius/dify |
| 7 | System Prompts and Models of AI Tools | 130k | Research materials | Developers | A repository collecting system prompts, internal tools, and model information from various AI products | https://github.com/x1xhlol/system-prompts-and-models-of-ai-tools |
| 8 | LangChain | 129k | Workflow orchestration | Developers | A framework for building LLM applications and AI agents | https://github.com/langchain-ai/langchain |
| 9 | Open WebUI | 127k | Application interface | Individuals | An AI interface for models such as Ollama and the OpenAI API | https://github.com/open-webui/open-webui |
| 10 | Generative AI for Beginners | 108k | Learning resources | Developers | A structured course repository for beginners in generative AI | https://github.com/microsoft/generative-ai-for-beginners |
| 11 | ComfyUI | 106k | Multimodal generation | Creators | A node-based image generation interface and backend | https://github.com/Comfy-Org/ComfyUI |
| 12 | Supabase | 98.9k | Data and context | Enterprises | A data platform for web, mobile, and AI applications | https://github.com/supabase/supabase |
| 13 | Gemini CLI | 97.2k | Agentic execution | Developers | An open-source AI agent that brings Gemini into the terminal | https://github.com/google-gemini/gemini-cli |
| 14 | Firecrawl | 91k | Data and context | Developers | A web data interface that turns websites into LLM-ready data | https://github.com/firecrawl/firecrawl |
| 15 | LLMs from Scratch | 87.7k | Learning resources | Developers | A teaching project for building ChatGPT-like models from scratch | https://github.com/rasbt/LLMs-from-scratch |
| 16 | awesome-mcp-servers | 82.7k | Tool connectivity | Developers | An open-source directory of MCP servers | https://github.com/punkpeye/awesome-mcp-servers |
| 17 | Deep-Live-Cam | 80k | Multimodal generation | Creators | An open-source tool for real-time face swapping and video generation | https://github.com/hacksider/Deep-Live-Cam |
| 18 | Netdata | 78k | AI operations | Enterprises | A full-stack observability platform with built-in AI capabilities | https://github.com/netdata/netdata |
| 19 | Spec Kit | 75.7k | AI engineering | Developers | A toolkit for spec-driven development | https://github.com/github/spec-kit |
| 20 | RAGFlow | 74.7k | Data and context | Enterprises | A context engine that combines RAG and agent capabilities | https://github.com/infiniflow/ragflow |

As the table shows, these projects are not all the same kind of product. Learning resources, prompt collections, and research repositories are useful as supporting references, but if we want to understand the core trends in open-source AI this year, it makes more sense to focus on the most representative tools and platforms. That is why the rest of this article looks at four major directions: agentic execution, workflow orchestration, data and context, and multimodal generation.

Agentic execution

OpenClaw

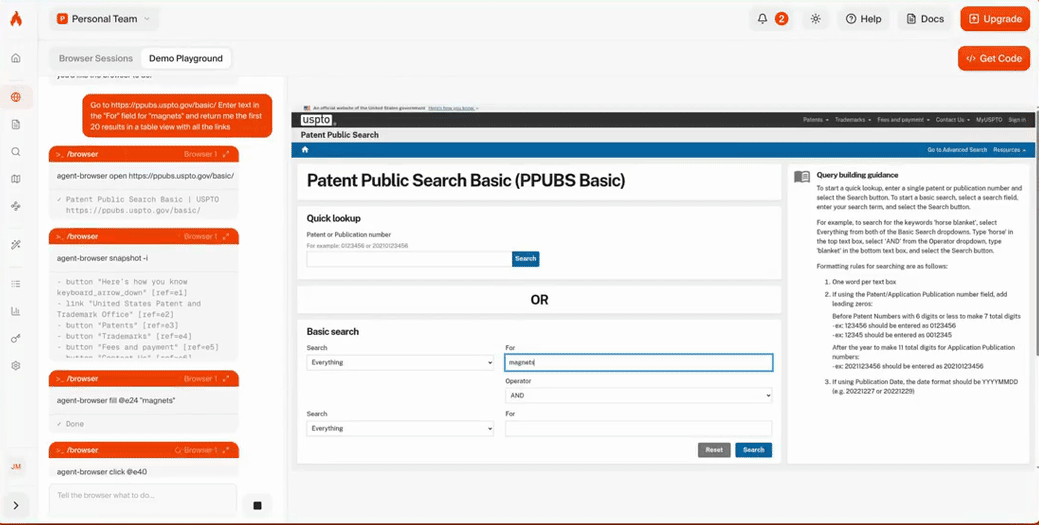

- GitHub link: https://github.com/openclaw/openclaw

- Official website: https://openclaw.ai

- GitHub Stars: 302k

Most people in the AI space are already familiar with OpenClaw, so we will keep this introduction brief.

OpenClaw is an open-source AI assistant designed for personal use. Its core idea is to bring AI directly into the communication channels people already use, instead of asking them to adopt a separate interface. It also functions as a self-hosted gateway, which means it runs on your own devices and under your own rules, making it especially appealing to developers and power users.

Core capabilities

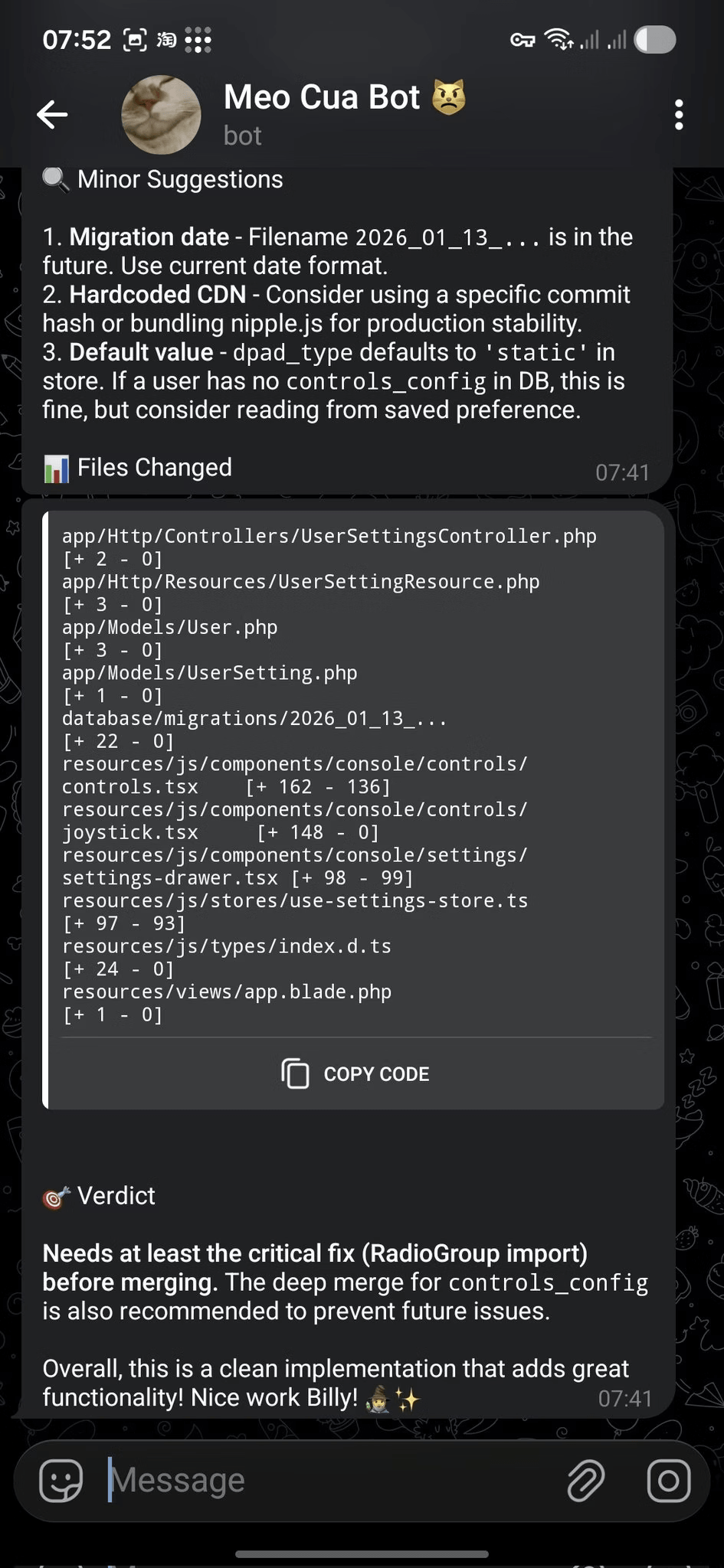

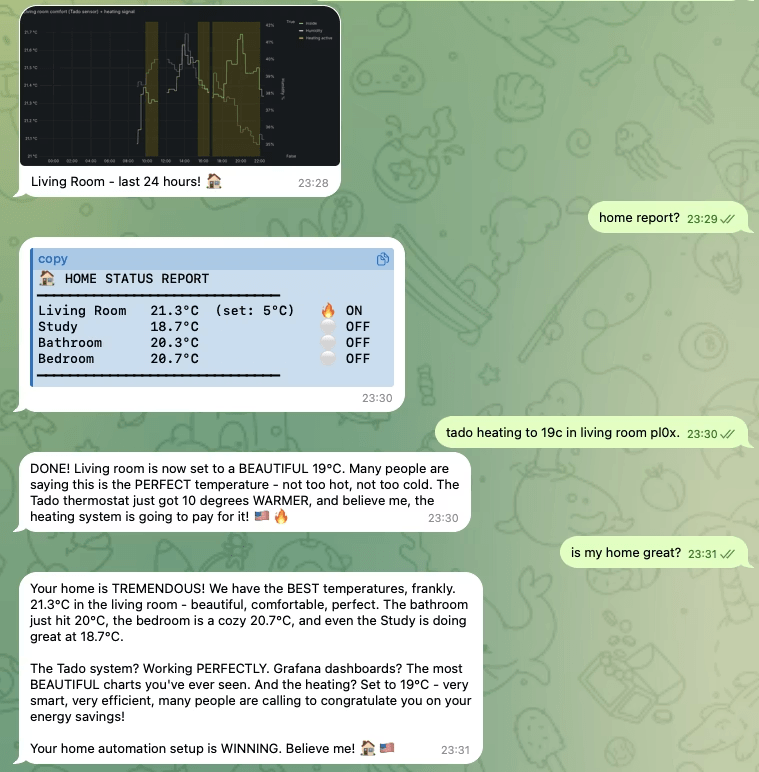

Bringing AI into existing messaging channels and device environments

OpenClaw can connect to WhatsApp, Telegram, Discord, iMessage, Feishu, and other channels, handling messages, conversations, and routing through a single gateway. It also supports voice activation, continuous voice interaction, Live Canvas, and nodes on iOS, Android, and macOS. As a result, AI is no longer confined to a chat window. It can work directly across messages, devices, and user interfaces.

Always-on architecture with room to grow

OpenClaw can run a gateway process on a local machine or server, then continuously receive and respond to requests through different messaging channels. It also supports plugin-based extensions, so beyond its default capabilities, it can be expanded with additional channels, such as Mattermost, and with new functions.
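
To make the single-gateway idea concrete, here is a minimal Python sketch. It is not OpenClaw's code or API: `Gateway`, `Message`, `register_channel`, and the echo assistant are hypothetical names chosen for illustration. It only shows the routing pattern described above, where every channel registers with one process and each reply goes back out through the channel the message arrived on.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Message:
    channel: str  # e.g. "telegram" or "discord"
    sender: str
    text: str


class Gateway:
    """Hypothetical single entry point that routes messages from many channels."""

    def __init__(self, assistant: Callable[[str], str]) -> None:
        self.assistant = assistant
        self.senders: Dict[str, Callable[[str, str], None]] = {}

    def register_channel(self, name: str, send: Callable[[str, str], None]) -> None:
        # In this sketch, a plugin would call this to add a new channel.
        self.senders[name] = send

    def handle(self, msg: Message) -> None:
        if msg.channel not in self.senders:
            raise ValueError(f"unknown channel: {msg.channel}")
        reply = self.assistant(msg.text)
        # The reply goes back through the same channel the message came from.
        self.senders[msg.channel](msg.sender, reply)


# Stand-in channels that record outgoing replies instead of calling a real API.
outbox: List[tuple] = []
gateway = Gateway(assistant=lambda text: f"echo: {text}")
gateway.register_channel("telegram", lambda to, text: outbox.append(("telegram", to, text)))
gateway.register_channel("discord", lambda to, text: outbox.append(("discord", to, text)))

gateway.handle(Message("telegram", "alice", "hello"))
gateway.handle(Message("discord", "bob", "status?"))
```

After the two `handle` calls, `outbox` holds one reply per channel. In this sketch, supporting another channel is one more `register_channel` call, which is roughly what a plugin-based extension model implies.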

AutoGPT

- GitHub link: https://github.com/Significant-Gravitas/AutoGPT

- Official website: https://agpt.co

- GitHub Stars: 182k

AutoGPT is an open-source project built around AI agents. Its value lies not just in offering a ready-to-use assistant, but in turning agent creation, deployment, and runtime management into a more complete platform. Compared with tools built for narrow or one-off use cases, it is more focused on helping agents move from experimental demos to long-running, extensible systems.

Core capabilities

A more complete platform for building, deploying, and running agents

AutoGPT is focused on bringing agent building, deployment, management, and operation into a unified system. It is no longer just an early autonomous agent experiment. It is evolving into a broader agent platform.

Support for persistent operation and long-running tasks

AutoGPT supports continuously running agents and has expanded into related platform, marketplace, and capability modules. That makes it better suited for automation and long-term task handling. Compared with products aimed more at personal assistant use cases, it is closer to the needs of developers, platform teams, and enterprise builders.

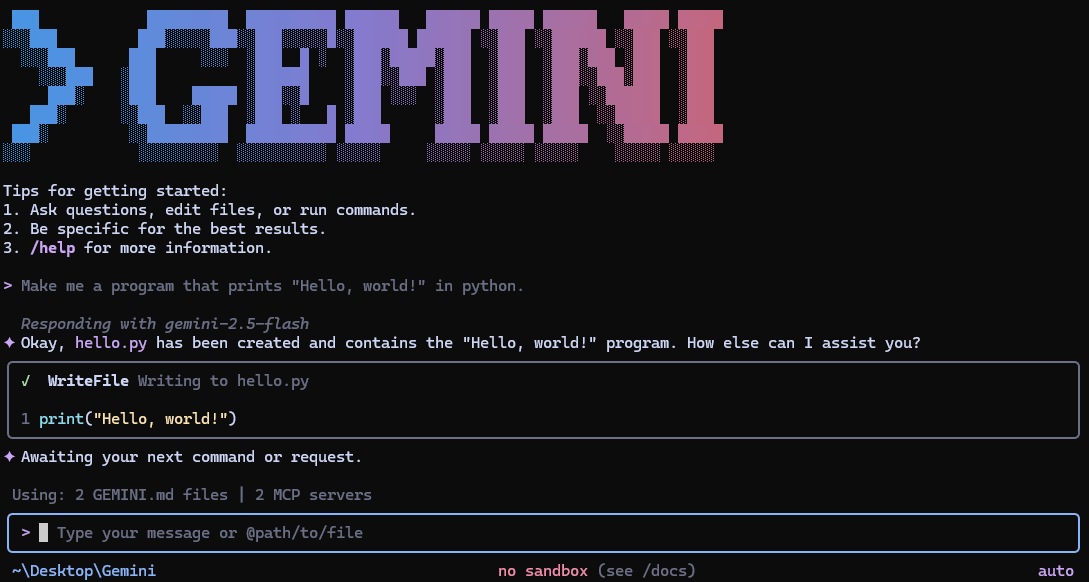

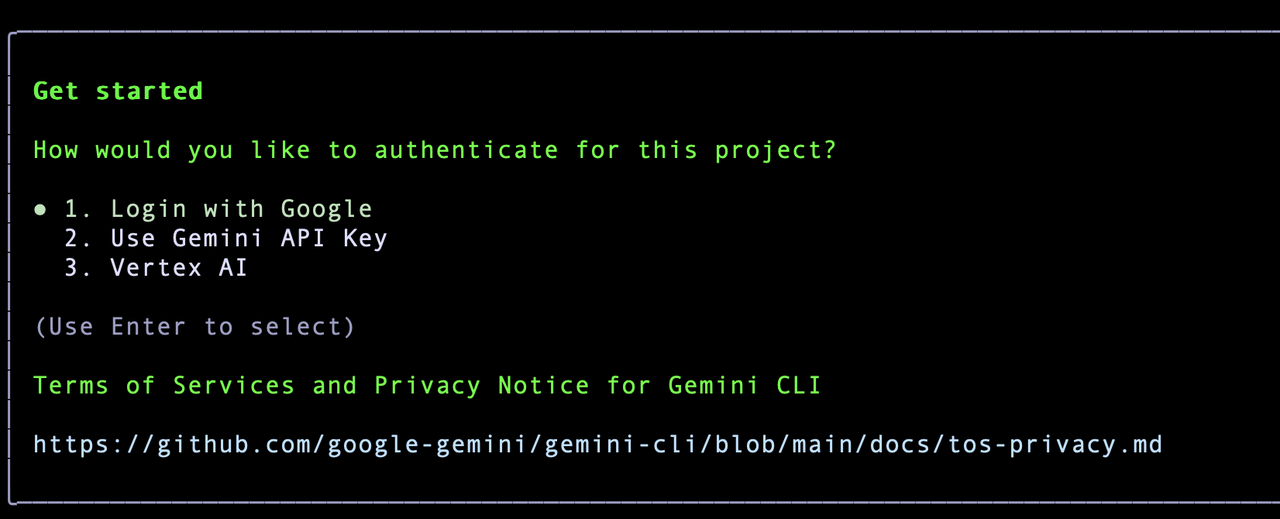

Gemini CLI

- GitHub link: https://github.com/google-gemini/gemini-cli

- Official website: https://geminicli.com

- GitHub Stars: 97.2k

Gemini CLI is Google’s open-source AI agent tool for the command line. Its core form is simple: it brings Gemini directly into the terminal. Compared with products built around chat interfaces, it fits more naturally into developers’ day-to-day workflow, especially in scenarios involving local project context, command-line operations, and ongoing task execution.

Core capabilities

Bringing AI directly into the terminal and local project environment

Gemini CLI can call Gemini directly from the terminal to support code understanding, task automation, and workflow construction. It can also work with local project context, allowing AI to do more than answer questions. It can actively participate in real tasks involving code, commands, and files.

Designed for continuous development workflows

It follows a reason-and-act approach, supports built-in tools as well as local or remote MCP servers for more complex tasks, and also allows custom slash commands.
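
A reason-and-act loop is easier to picture with a small sketch. The Python below is not Gemini CLI's implementation: the `TOOLS` table and the `scripted_model` function are hypothetical stand-ins for real tools and a real model call. It only shows the general pattern of choosing an action, running a tool, feeding the observation back, and stopping when the model decides it is done.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools; a real agent would expose file, shell, or MCP tools here.
TOOLS: Dict[str, Callable[[str], str]] = {
    "count_lines": lambda text: str(len(text.splitlines())),
    "upper": lambda text: text.upper(),
}


def scripted_model(history: List[str]) -> Tuple[str, str]:
    """Stand-in for an LLM: returns (action, argument) based on the history."""
    if not any(h.startswith("observation:") for h in history):
        return ("count_lines", "a\nb\nc")
    last = history[-1].split(":", 1)[1].strip()
    return ("finish", f"The file has {last} lines.")


def run_agent(task: str, max_steps: int = 5) -> str:
    history = [f"task: {task}"]
    for _ in range(max_steps):
        action, arg = scripted_model(history)  # reason: pick the next action
        if action == "finish":
            return arg
        observation = TOOLS[action](arg)  # act: call the chosen tool
        history.append(f"observation: {observation}")
    return "stopped: step limit reached"


answer = run_agent("How many lines are in the file?")
```

The `max_steps` cap is a design choice worth keeping in any real loop of this kind, since it bounds how long an agent can run when the model never decides to finish.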

Workflow orchestration

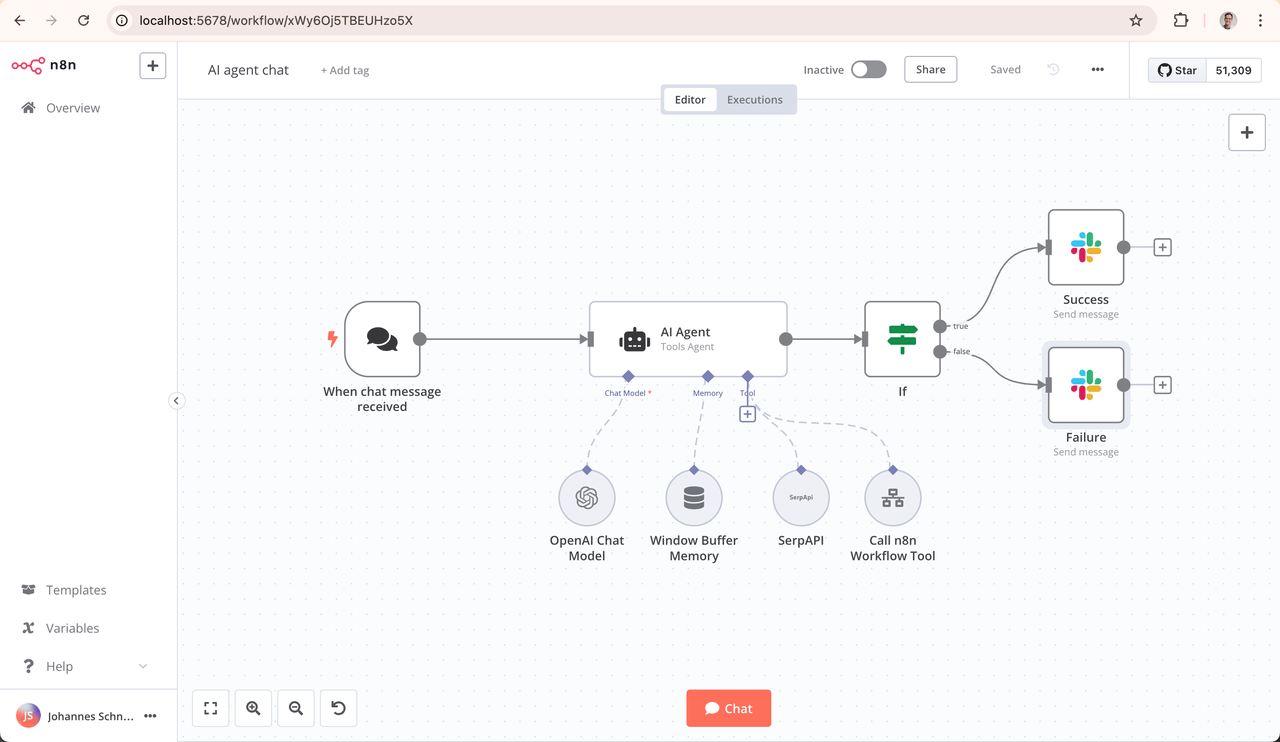

n8n

- GitHub link: https://github.com/n8n-io/n8n

- Official website: https://n8n.io

- GitHub Stars: 179k

n8n is a workflow automation platform that combines visual orchestration, code extensibility, and AI capabilities in a single workflow system. Compared with tools focused on standalone agents or single-model integrations, it is better suited to connecting models, data sources, external tools, and business processes into automation pipelines that can run continuously.

Core capabilities

Embedding AI into end-to-end workflows

n8n lets users build workflows on a visual canvas while still supporting code-based customization. That combination makes it suitable for both rapid setup and deeper tailoring. It can connect data sources, AI models, and external tools, allowing business process automation and AI workflows to live inside the same system.

Making AI a real part of the workflow system

n8n already offers features such as AI Agent, AI Workflow Builder, and Chat Hub. It is not just about plugging models into workflows. It is about organizing multi-step tasks, tool usage, and interaction points into a broader automation system. That makes it a better fit for team workflows and real business operations.

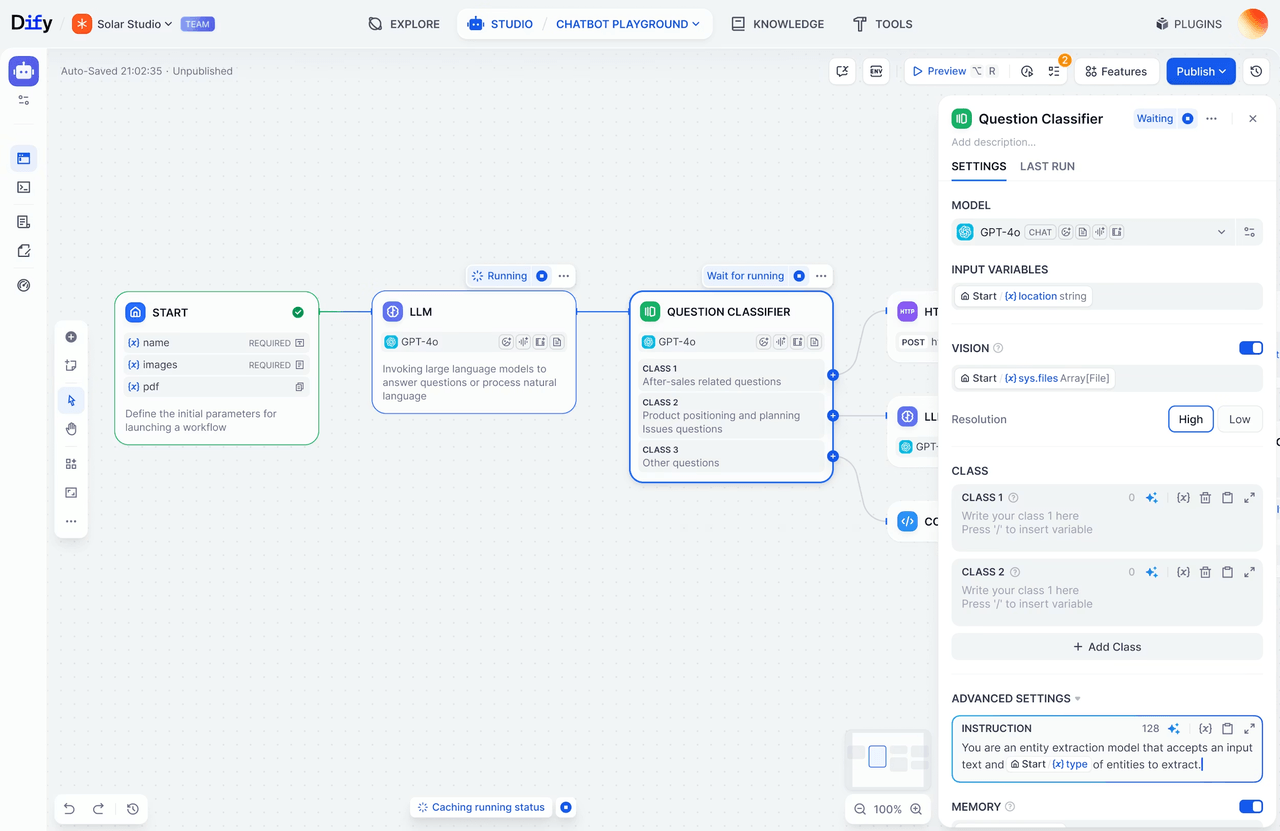

Dify

- GitHub link: https://github.com/langgenius/dify

- Official website: https://dify.ai

- GitHub Stars: 132k

Dify is an open-source platform for building LLM applications. It brings together AI workflows, RAG, agent capabilities, model management, and application observability in one product. Compared with tools focused more narrowly on automation, Dify is closer to the full lifecycle of AI application building, from prototype to production.

Core capabilities

Visual AI workflow design

Dify provides a visual workflow canvas for building and testing AI workflows directly. It also supports a wide range of open-source and closed-source models, including services compatible with the OpenAI API. For developers and teams, that means many AI applications can be prototyped and assembled quickly without rebuilding every layer from scratch.

Integrated support for models, RAG, and observability

Dify includes built-in RAG capabilities, agent tool support, and application logging and analysis. It helps teams not only launch AI applications faster, but also continue debugging, optimizing, and maintaining them after release. In that sense, it acts as a unified platform for both AI app development and ongoing operations.

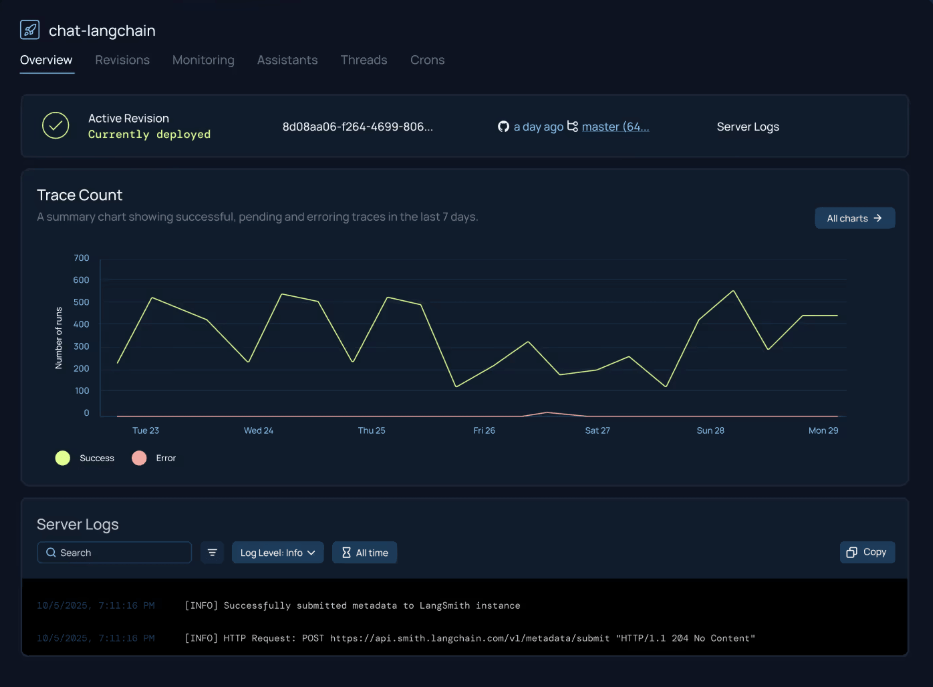

LangChain

- GitHub link: https://github.com/langchain-ai/langchain

- Official website: https://www.langchain.com

- GitHub Stars: 129k

LangChain is an open-source framework for building LLM applications and AI agents. Its core strength lies in connecting models, tools, context, and external integrations. Compared with platforms built around visual workflows, it is more of a developer framework, making it better suited to teams that need more control and deeper customization.

Core capabilities

Composable building blocks for AI application chains

LangChain provides a large set of reusable components and third-party integrations, making it easier to connect models, tools, memory, and external services into a complete chain. For developers, the main advantage is speed and structure. It reduces the need to build every layer from scratch and helps organize applications more efficiently.
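
The idea of composable building blocks can be sketched without any framework. The Python below deliberately does not import LangChain: `Step`, `prompt`, `fake_model`, and `parser` are hypothetical stand-ins, and the pipe operator here is only similar in spirit to the pipe-style composition LangChain offers. It shows how small units with one shared interface can be chained into a single callable.

```python
from typing import Any, Callable


class Step:
    """A tiny composable unit; `a | b` runs a, then feeds its result to b."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        return Step(lambda value: other.fn(self.fn(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Hypothetical components: a prompt template, a fake model, and an output parser.
prompt = Step(lambda topic: f"Summarize {topic} in one line.")
fake_model = Step(lambda text: f"ANSWER: stub reply to '{text}'")
parser = Step(lambda reply: reply.split("ANSWER: ", 1)[1])

chain = prompt | fake_model | parser
result = chain.invoke("pgvector")
```

Because every step takes one value and returns one value, swapping the fake model for a real client, or inserting a retrieval step before the prompt, does not change how the chain is assembled.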

A foundation for orchestrating complex agents

LangChain can be used to build agents quickly, and when paired with LangGraph, it can support longer workflows, stateful execution, and more controllable orchestration. Together with supporting tools such as LangSmith and Deep Agents, it is gradually becoming a core framework for AI applications and agent systems.

Data and context

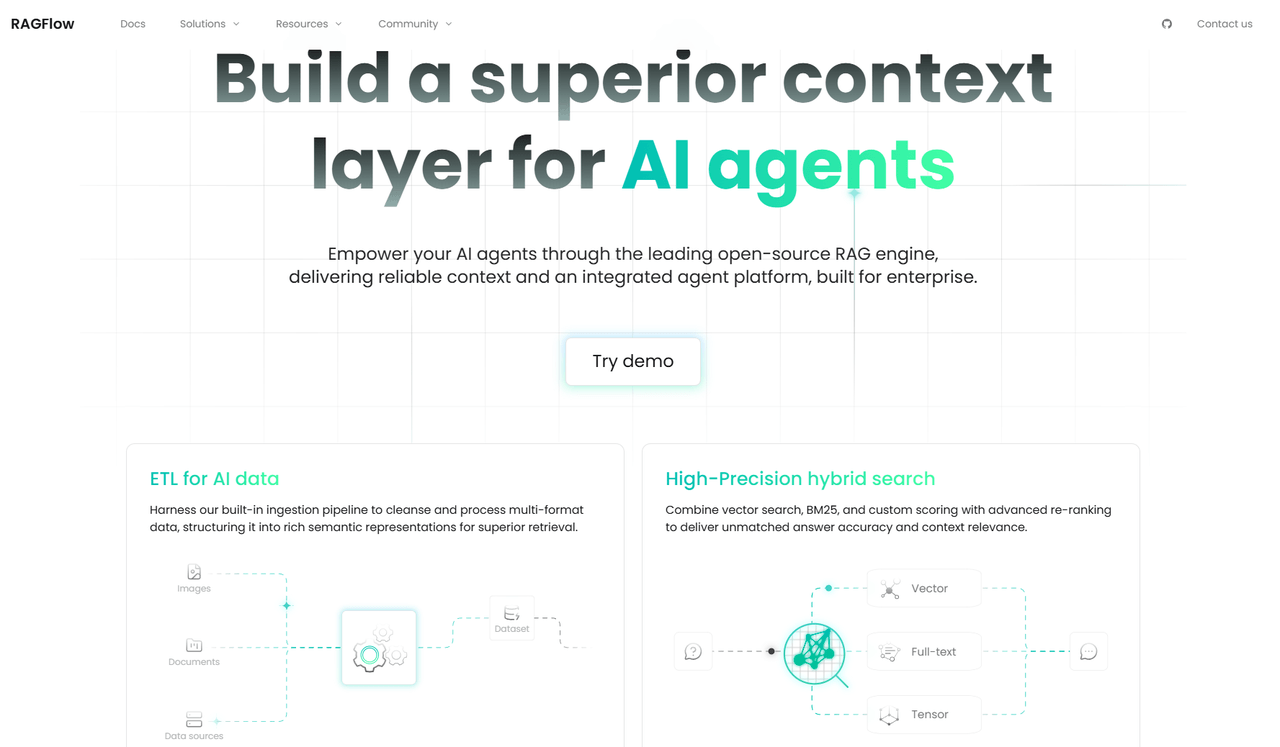

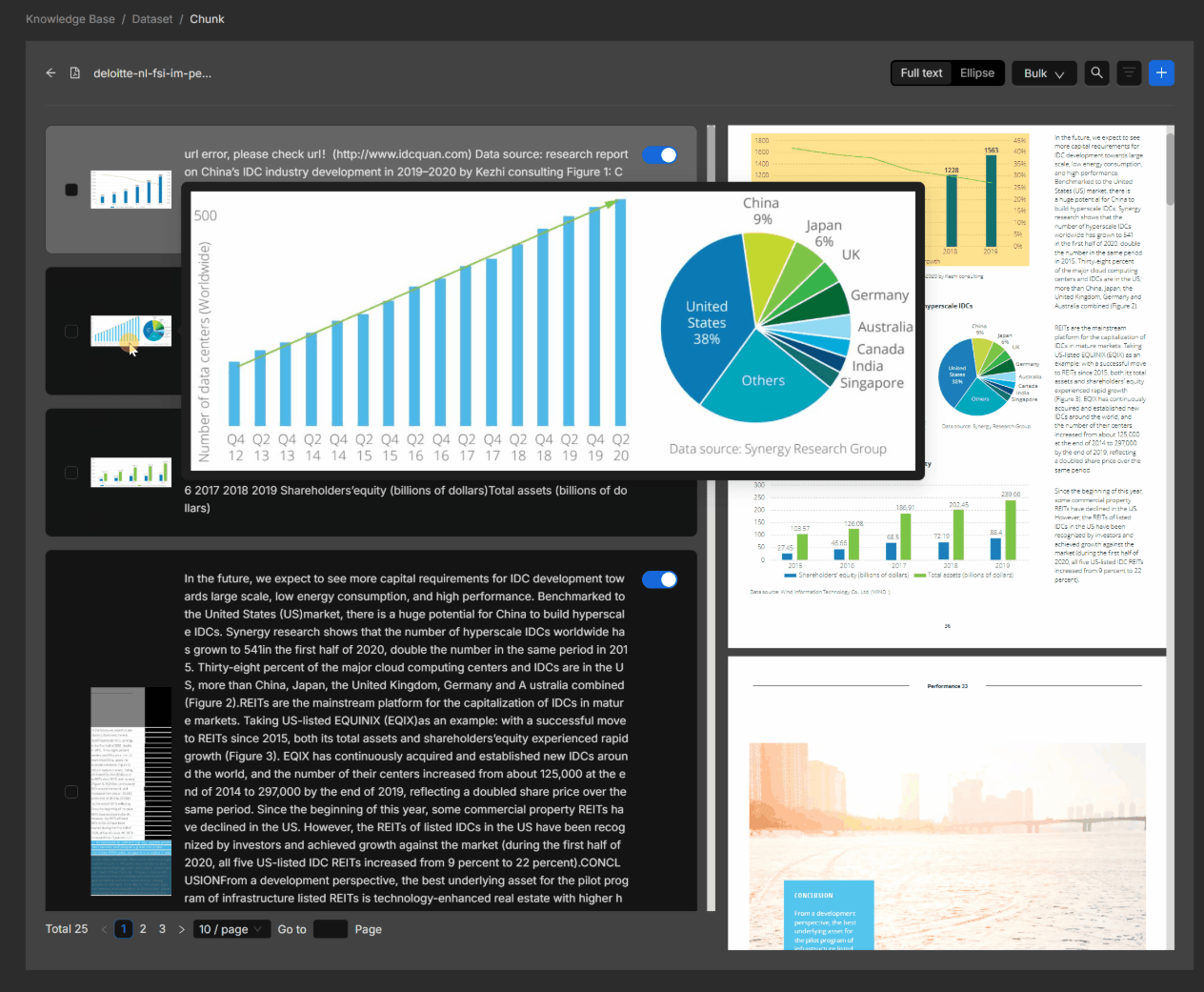

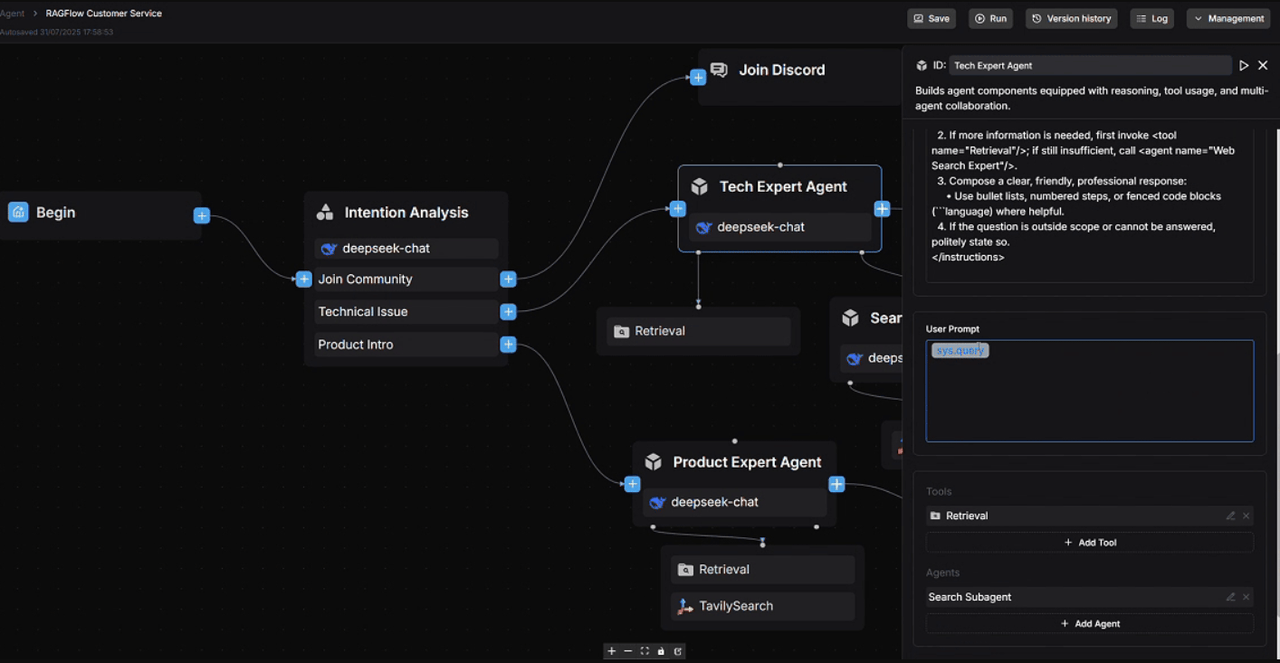

RAGFlow

- GitHub link: https://github.com/infiniflow/ragflow

- Official website: https://ragflow.io

- GitHub Stars: 74.7k

RAGFlow is an open-source RAG engine focused on giving LLMs a more reliable context layer. It brings document parsing, data cleaning, retrieval enhancement, and agent capabilities into a single system.

Core capabilities

Document parsing and data preprocessing

RAGFlow includes built-in data ingestion and processing capabilities. It can clean and parse many kinds of data, then organize them into semantic representations that are easier to retrieve and use. In scenarios with complex documents and fragmented data sources, this matters a great deal because context quality often determines answer quality.

A full RAG pipeline built around context

RAGFlow supports document-aware RAG workflows and helps build more reliable question-answering and citation chains for complex data formats. It also includes agent platform features and orchestratable data flows, with workflow canvases, agent nodes, and related APIs already added. That makes it increasingly suitable for enterprise knowledge systems and more complex application scenarios.
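
As a rough illustration of why parsing and retrieval quality matter, here is a minimal retrieve-then-cite sketch in Python. It is not RAGFlow's pipeline or API: the sentence splitter, the word-overlap scoring, and the sample document are simplifying assumptions, and a real system would use proper document parsing and embedding-based retrieval. The point is that each retrieved chunk keeps an id, so the final answer can cite where its context came from.

```python
import re
from typing import List, Tuple


def chunk(text: str) -> List[str]:
    """Split text into sentence-level chunks (a deliberately naive parser)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, chunks: List[str], k: int = 1) -> List[Tuple[int, str]]:
    """Return the k chunks sharing the most words with the query, with their ids."""
    q = tokens(query)
    scored = sorted(enumerate(chunks), key=lambda c: len(q & tokens(c[1])), reverse=True)
    return scored[:k]


# Made-up sample document for the sketch.
doc = (
    "Invoices are due within 30 days. "
    "Refunds are processed within 5 business days. "
    "Support is available on weekdays."
)
chunks = chunk(doc)
hits = retrieve("How long do refunds take?", chunks)

# Numbered context lines let the model (or a reader) cite the source chunk.
context = "\n".join(f"[{i}] {c}" for i, c in hits)
```

If the parser had split the document badly, for example by cutting the refund sentence in half, the right chunk would score lower or carry incomplete information, which is the sense in which context quality bounds answer quality.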

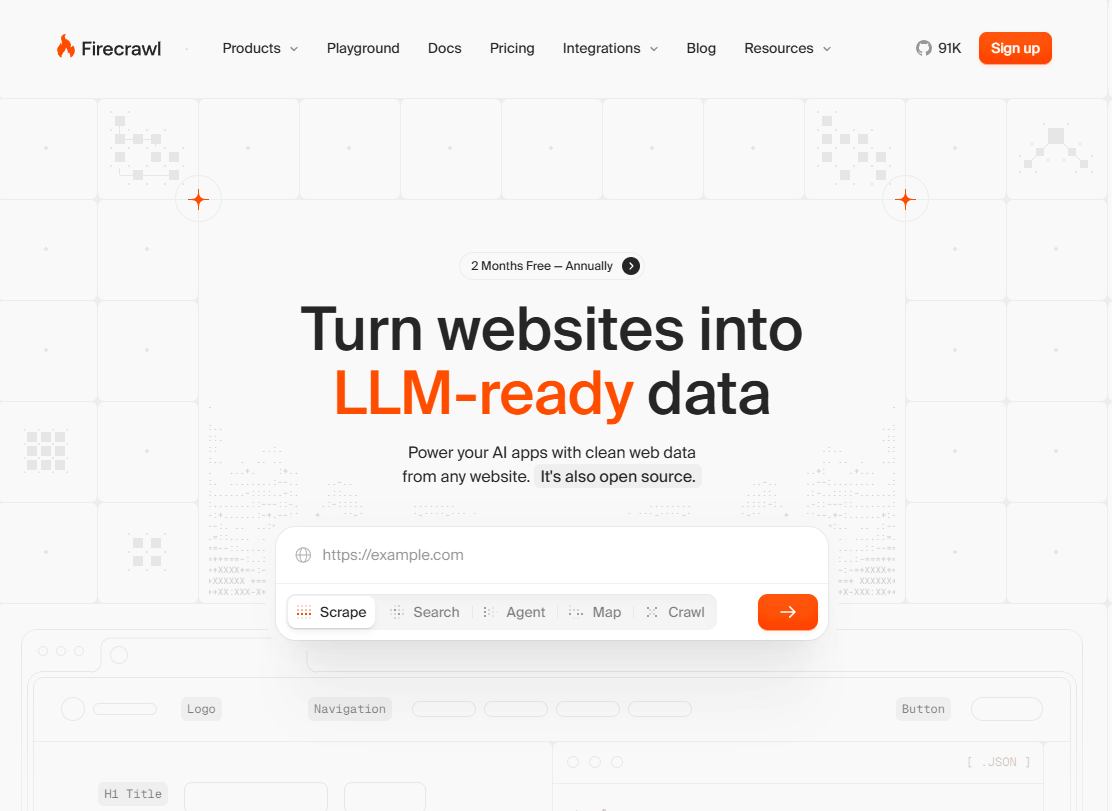

Firecrawl

- GitHub link: https://github.com/firecrawl/firecrawl

- Official website: https://www.firecrawl.dev

- GitHub Stars: 91k

Firecrawl is a web data interface built for AI. Its core function is to crawl and extract website content, then turn it into structured data or Markdown that LLMs can use directly. Unlike traditional web crawlers, it is designed less for collecting raw pages and more for turning websites into usable context for AI applications and agents.

Core capabilities

Web crawling and structured extraction

Firecrawl can crawl, scrape, extract, and search website content, then output it in formats such as Markdown, JSON, links, screenshots, and HTML. For AI applications, the value is not just access to webpage content, but access to data that is already much easier for models to process.
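
To show what "easier for models to process" means in practice, the sketch below converts a small HTML fragment into Markdown using only Python's standard library. It is not Firecrawl's implementation or SDK: `MarkdownExtractor` and `html_to_markdown` are hypothetical names, and the converter handles only headings, paragraphs, and links. Text outside those blocks, such as navigation, is dropped.

```python
from html.parser import HTMLParser
from typing import List, Optional


class MarkdownExtractor(HTMLParser):
    """Convert a small subset of HTML (h1, h2, p, a) into Markdown."""

    BLOCKS = {"h1": "# ", "h2": "## ", "p": ""}

    def __init__(self) -> None:
        super().__init__()
        self.lines: List[str] = []
        self.buffer: List[str] = []
        self.href: Optional[str] = None
        self.link_text: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self.href = dict(attrs).get("href")
            self.link_text = []
        elif tag in self.BLOCKS:
            # Starting a new block discards stray text collected outside blocks.
            self.buffer = []

    def handle_data(self, data):
        if self.href is not None:
            self.link_text.append(data)
        else:
            self.buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self.href is not None:
            self.buffer.append(f"[{''.join(self.link_text)}]({self.href})")
            self.href = None
        elif tag in self.BLOCKS:
            text = "".join(self.buffer).strip()
            if text:
                self.lines.append(self.BLOCKS[tag] + text)
            self.buffer = []


def html_to_markdown(html: str) -> str:
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    return "\n\n".join(parser.lines)


page = (
    "<h1>Pricing</h1><nav>Home</nav>"
    '<p>See the <a href="https://example.com/docs">docs</a> for details.</p>'
)
markdown = html_to_markdown(page)
```

The result keeps the heading, the paragraph, and the link target while discarding the navigation text, which is the kind of cleanup that makes page content cheaper and less noisy to pass to a model.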

Data ingestion for LLM-powered applications

Firecrawl can turn entire websites into data that is ready for LLM use. It already offers an MCP server, SDKs, and example projects, making it easy to plug into environments such as Cursor, Claude, and other developer tools. It is especially useful for applications that need live website data, external knowledge sources, or stronger retrieval capabilities.

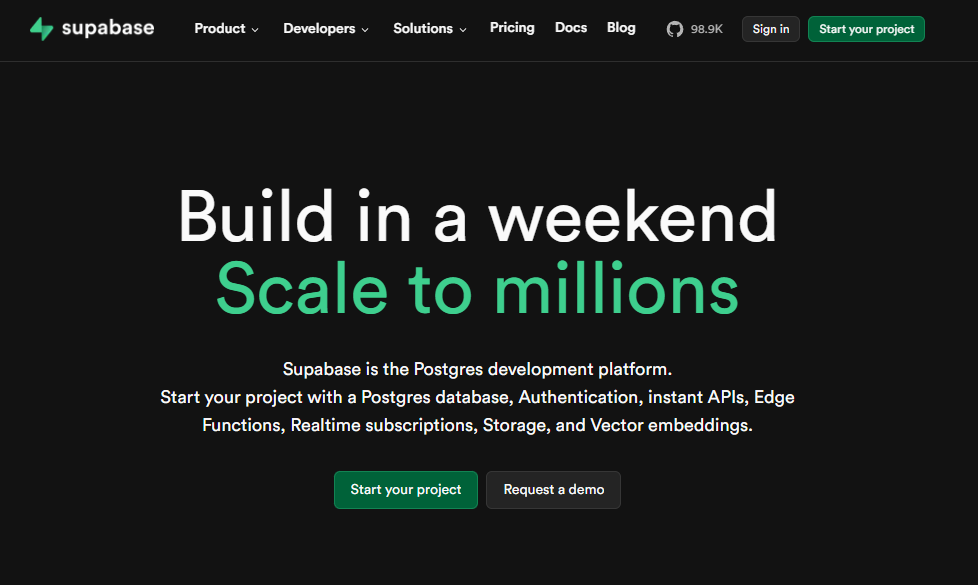

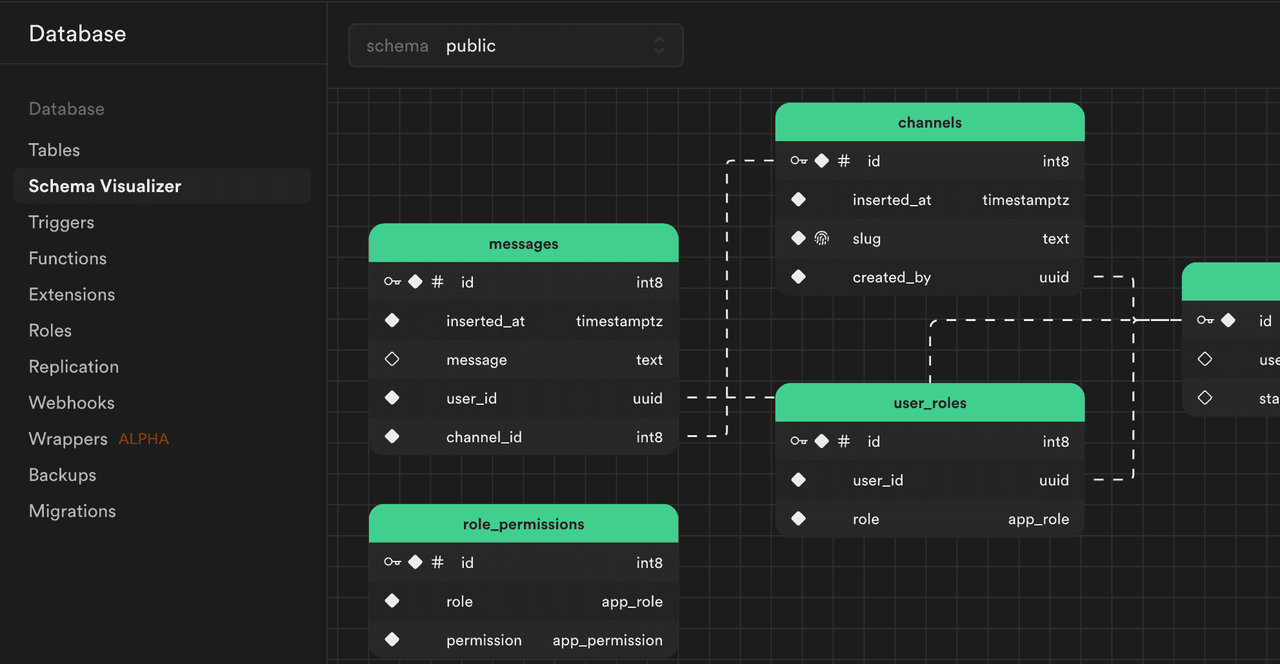

Supabase

- GitHub link: https://github.com/supabase/supabase

- Official website: https://supabase.com

- GitHub Stars: 98.9k

Supabase is a Postgres-based development platform that combines databases, authentication, real-time APIs, Edge Functions, storage, and vector capabilities in a single system. In the context of this article, what makes it especially relevant is not just its role as a backend platform, but the fact that it has made vectors, embeddings, and AI-ready data infrastructure part of its core platform offering.

Core capabilities

An integrated foundation for data and applications

Supabase provides a complete stack that includes Postgres, authentication, APIs, real-time features, Edge Functions, and storage. That makes it a practical foundation for web, mobile, and AI applications. For many teams, this means data, access control, and backend services can all be managed in one place rather than spread across multiple tools.

Built-in vector retrieval and embedding management

Supabase has already integrated AI and vector capabilities directly into the platform. It supports storing, indexing, and querying embeddings with Postgres and pgvector, along with semantic search, keyword search, and hybrid search. Combined with Edge Functions, queues, triggers, and extensions, it can also handle automatic embedding generation, updates, and retries, making it well suited to growing context and retrieval workloads.
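
Hybrid search combines a semantic ranking with a keyword ranking. One common way to merge the two is reciprocal rank fusion (RRF), sketched below in plain Python. This is an illustration of the technique, not Supabase's internal implementation: in practice the rankings would come from pgvector and Postgres full-text queries, while here the documents, the two-dimensional "embeddings", and the constant `k = 60` are assumptions made up for the example.

```python
import math
from typing import Dict, List, Tuple


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def keyword_rank(query: str, docs: Dict[str, Tuple[str, List[float]]]) -> List[str]:
    """Rank ids by number of shared words; documents with no match are left out."""
    q = set(query.lower().split())
    hits = {d: len(q & set(text.lower().split())) for d, (text, _) in docs.items()}
    return sorted((d for d in hits if hits[d] > 0), key=lambda d: hits[d], reverse=True)


def rrf(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse ranked id lists; each list contributes 1 / (k + rank) per id."""
    scores: Dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)


# Toy corpus: (text, made-up 2-d embedding).
docs = {
    "a": ("how to reset a password", [1.0, 0.0]),
    "b": ("password policy for admins", [0.6, 0.8]),
    "c": ("sign-in recovery steps", [0.8, 0.6]),
}
query_text, query_vec = "reset password", [1.0, 0.0]

semantic = sorted(docs, key=lambda d: cosine(query_vec, docs[d][1]), reverse=True)
keyword = keyword_rank(query_text, docs)
fused = rrf([semantic, keyword])
```

In this toy data the semantic ranking alone places `c` above `b`, but `b` also matches a keyword, so the fused ranking moves it ahead of `c`. RRF only needs ranks, not comparable scores, which is why it is a convenient way to merge results from two different search methods.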

Multimodal generation

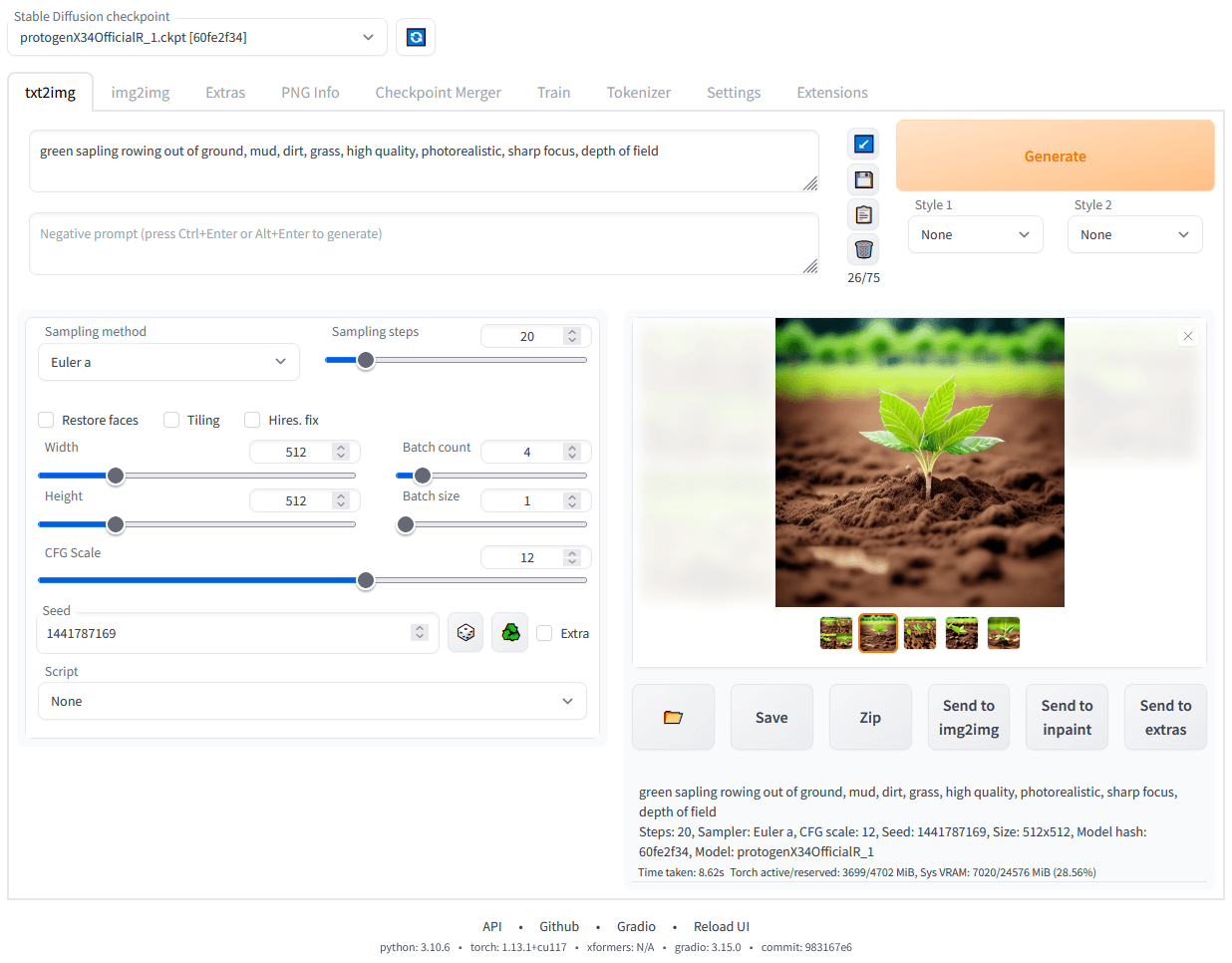

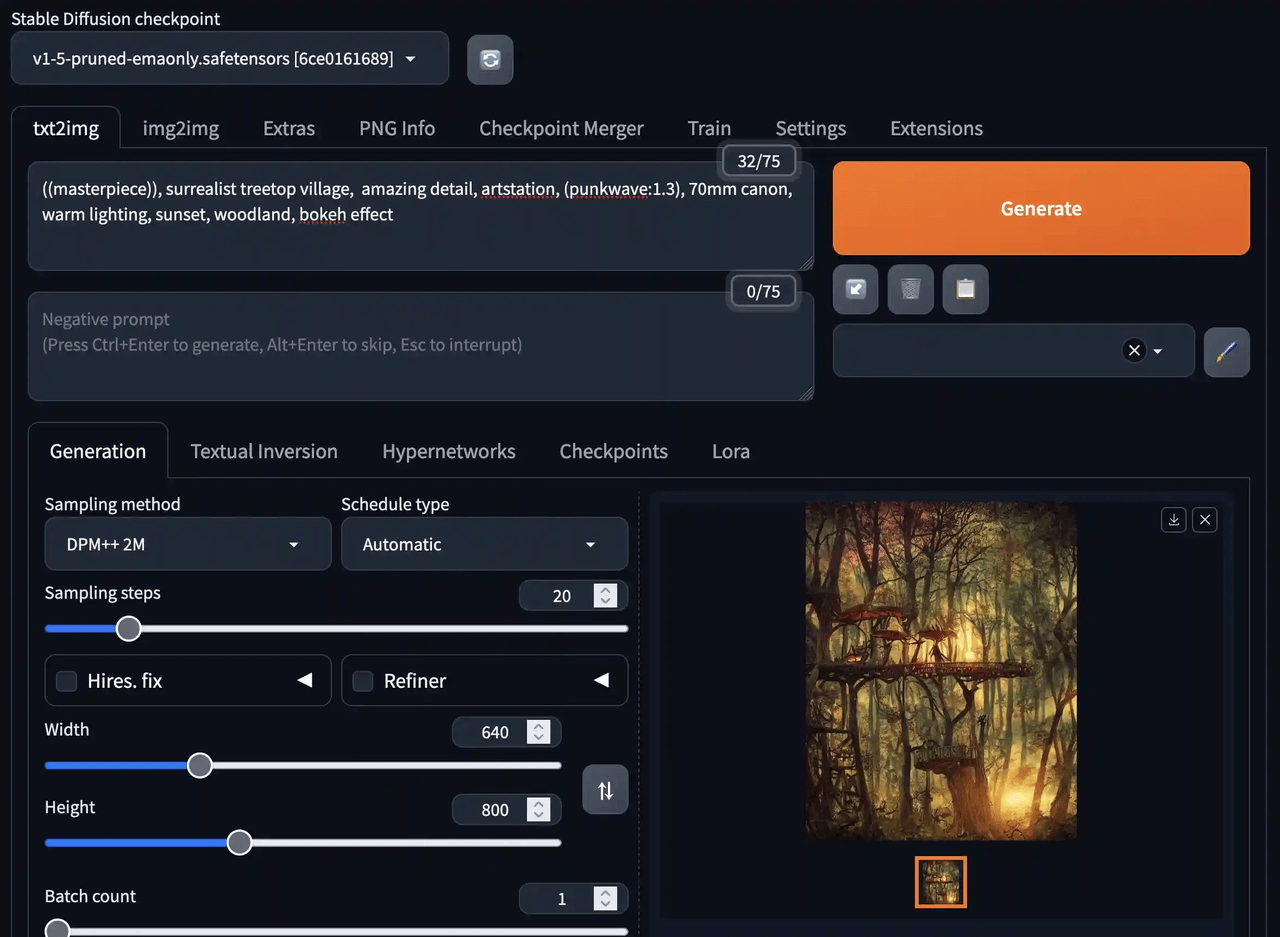

Stable Diffusion WebUI

- GitHub link: https://github.com/AUTOMATIC1111/stable-diffusion-webui

- Official website: No standalone website at the moment

- GitHub Stars: 162k

Stable Diffusion WebUI is a Stable Diffusion web interface built on Gradio. It brings local deployment, parameter control, and image generation into one place. Compared with node-based workflow tools, it feels more like a traditional image generation console and is better suited to detailed work around text-to-image, image-to-image, and model tuning.

Core capabilities

Image generation and editing

Stable Diffusion WebUI supports both text-to-image and image-to-image generation, along with common editing features such as inpainting, outpainting, and upscaling. For users who want to work directly with prompts, reference images, and editing workflows, these features already cover most everyday needs.

Parameter control and local extensibility

Stable Diffusion WebUI offers fine-grained control over sampling methods, prompt weights, batch generation, parameter reuse, and multi-dimensional parameter comparison through its X/Y/Z plot. It also has a mature local runtime and extension ecosystem, supporting features such as textual inversion embeddings, with plenty of room for further expansion.
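
Multi-dimensional parameter comparison boils down to generating one run per combination of settings while holding everything else fixed. The Python sketch below shows that idea only. It does not call Stable Diffusion WebUI, and `parameter_grid`, the prompt, and the setting names and values are illustrative assumptions rather than the tool's own configuration format.

```python
from itertools import product
from typing import Dict, List


def parameter_grid(base: Dict[str, object], axes: Dict[str, List[object]]) -> List[Dict[str, object]]:
    """Return one settings dict per combination of axis values."""
    names = list(axes)
    combos = product(*(axes[name] for name in names))
    return [{**base, **dict(zip(names, combo))} for combo in combos]


# A fixed prompt and seed keep the runs comparable; only the axes vary.
base = {"prompt": "a lighthouse at dusk", "seed": 1234}
axes = {"steps": [20, 30], "cfg_scale": [5.0, 7.5, 10.0]}

grid = parameter_grid(base, axes)
for settings in grid:
    print(settings)
```

Two values on one axis and three on the other give six runs. Keeping the seed fixed is what makes the resulting images a fair side-by-side comparison of the varied parameters.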

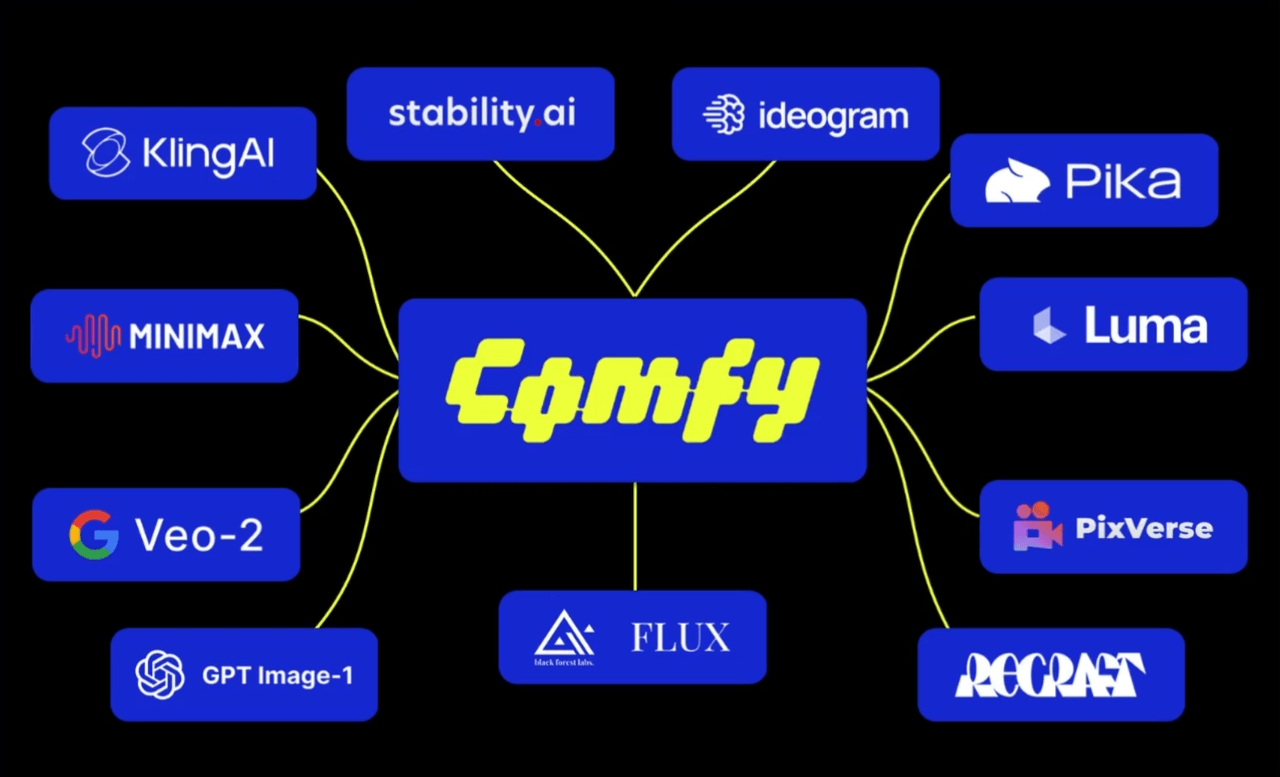

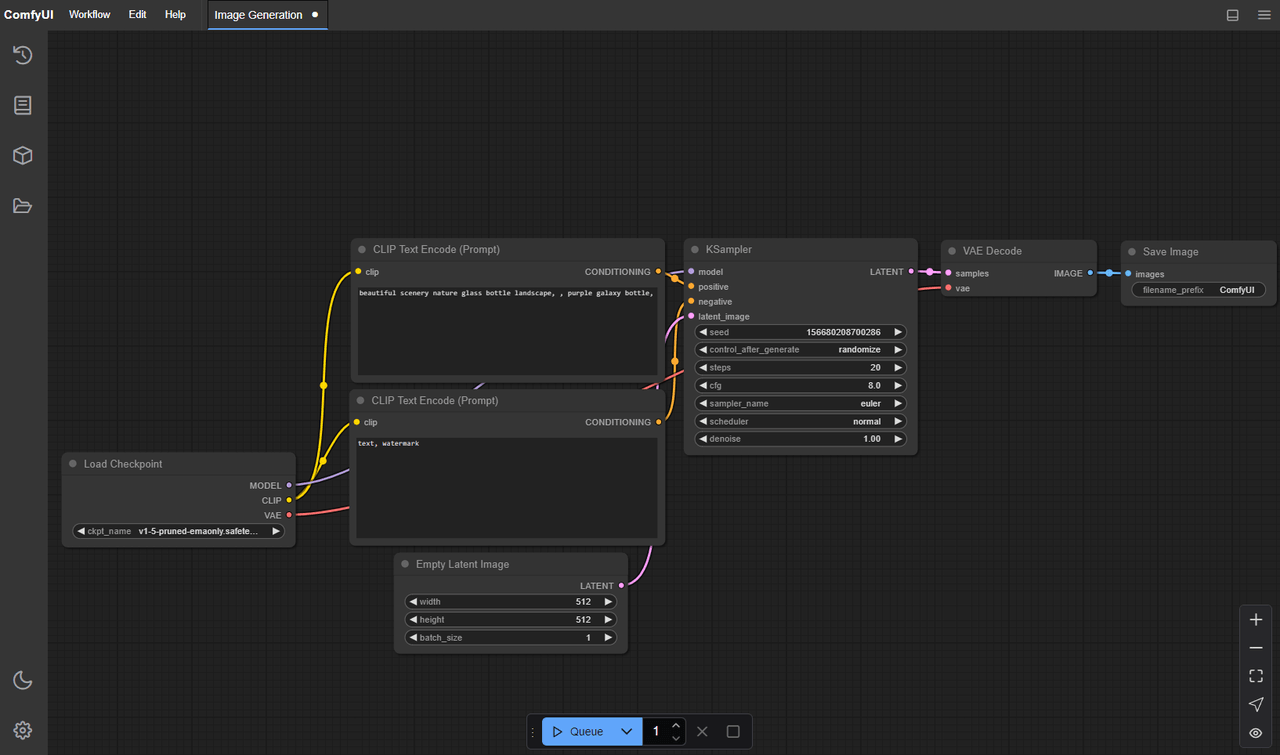

ComfyUI

- GitHub link: https://github.com/Comfy-Org/ComfyUI

- Official website: https://www.comfy.org/

- GitHub Stars: 106k

ComfyUI is a node-based workflow tool for generative visual AI. Users design and run complex Stable Diffusion workflows as graphs of connected nodes. Compared with the more console-style experience of Stable Diffusion WebUI, ComfyUI puts greater emphasis on modular composition, workflow reuse, and complex generation pipelines.

Core capabilities

Node-based workflow orchestration for generation

ComfyUI lets users build complex Stable Diffusion workflows through nodes, graphs, and flowchart-style interfaces. Many experiments and combinations can be done without writing code. For users who regularly need to adjust prompts, models, control inputs, and generation steps, this approach is far more flexible than tweaking parameters one run at a time.
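
Under the hood, a node-based workflow is a dependency graph: each node runs once its inputs are ready. The Python sketch below illustrates that execution model with the standard library's `graphlib` (Python 3.9 or later). It is not ComfyUI's workflow format or engine, and the node names and the string-producing "sampler" and "decoder" are placeholders invented for the example.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Tuple

# node id -> (function, ids of the nodes whose outputs it consumes)
Graph = Dict[str, Tuple[Callable[..., object], List[str]]]


def run_graph(graph: Graph) -> Dict[str, object]:
    """Run every node after its dependencies and collect the outputs."""
    order = TopologicalSorter({n: deps for n, (_, deps) in graph.items()}).static_order()
    results: Dict[str, object] = {}
    for node in order:
        fn, deps = graph[node]
        results[node] = fn(*(results[d] for d in deps))
    return results


# Placeholder nodes that return strings instead of real tensors or images.
graph: Graph = {
    "prompt": (lambda: "a red fox", []),
    "seed": (lambda: 42, []),
    "sample": (lambda p, s: f"latent({p}, seed={s})", ["prompt", "seed"]),
    "decode": (lambda latent: f"image<{latent}>", ["sample"]),
}

outputs = run_graph(graph)
```

Changing the prompt node or inserting an extra step between `sample` and `decode` only edits the graph, not the runner, which is the property that makes node-based pipelines easy to save, reuse, and extend.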

Better suited to reusable and extensible generation pipelines

ComfyUI supports multiple image models and generation workflows, and has already expanded into areas such as video, 3D, audio, and broader visual generation tasks. It also provides sample workflows, desktop apps, and complete documentation, making it easier to save, reuse, and extend generation pipelines over time.

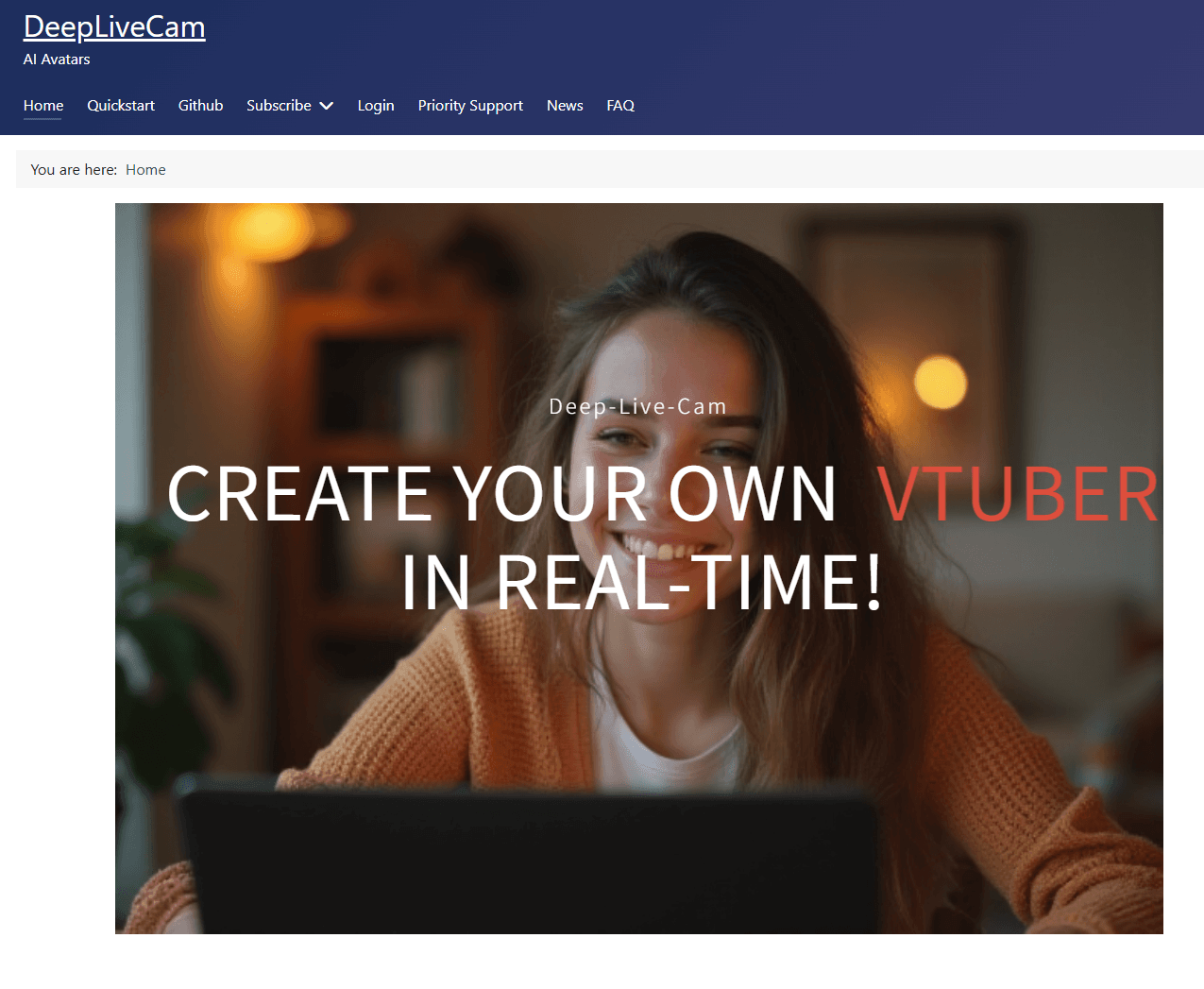

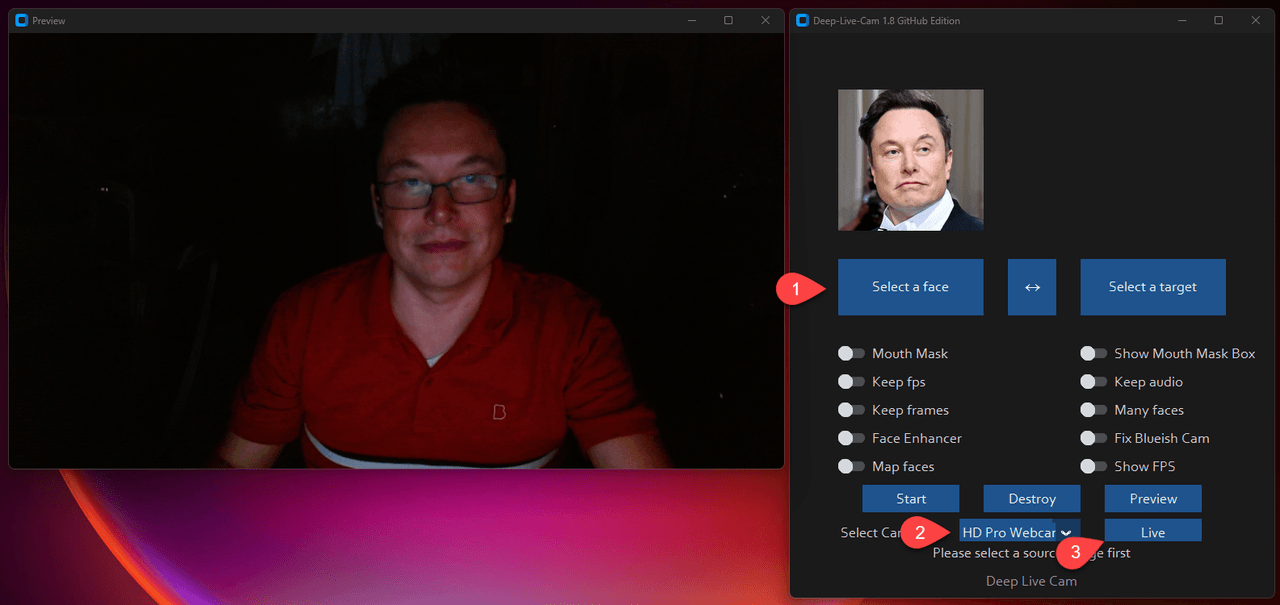

Deep-Live-Cam

- GitHub link: https://github.com/hacksider/Deep-Live-Cam

- Official website: No standalone website at the moment

- GitHub Stars: 80k

Deep-Live-Cam is an open-source project built for real-time video processing. Its main capabilities are real-time face swapping and one-click video transformation. Compared with multimodal tools that focus more on image generation or workflow orchestration, it brings generative AI directly into camera feeds, livestreams, and video processing pipelines, with a strong focus on real-time usability.

Core capabilities

Real-time face replacement for video

Deep-Live-Cam supports both real-time face swapping and one-click video processing, with a clear focus on instant results in video and livestream settings. Instead of stopping at static image generation or post-production editing, it applies generative capabilities directly to video streams.

Camera integration and local deployment

Deep-Live-Cam can work directly with live camera input and can also process existing video files. It also supports local deployment and provides fairly complete installation guidance covering GPUs, inference dependencies, and runtime requirements, making it practical to run on your own hardware.

Conclusion

If OpenClaw helped more people see what AI can do in personal productivity scenarios, then the changes of the past few months make one thing clear: these capabilities are no longer staying at the level of consumer tools alone.

Whether it is regional ecosystems forming around OpenClaw or vendors quickly turning it into packaged products, once popular AI tools enter more complex application environments, they almost always move further into enterprise and industry use cases. And what enterprises need is not just an agent that can chat and call tools. They need a system environment that can connect to data, fit into workflows, enforce permissions, and support collaboration.

If you want to explore how AI can truly be embedded into business systems and real operational workflows, visit the NocoBase website to learn more about our work in AI employees and business system development.

Related reading:

- Best Open Source AI CRM: NocoBase vs Twenty vs Krayin

- Top 3 Open Source ERP with AI on GitHub: NocoBase vs Odoo vs ERPNext

- 5 Most Popular Open-Source AI Project Management Tools on GitHub

- 6 Best Open-Source AI Ticketing Systems

- 4 Open Source Data Management Tools for Business Systems

- 4 Lightweight Enterprise Software for Business Processes (With Real-World Cases)

- 6 Enterprise Softwares to Replace Excel for Internal Operations

- 10 Open Source Tools Developers Use to Reduce Repetitive CRUD